Exploring Data-Efficient 3D Scene Understanding with Contrastive Scene Contexts

Ji Hou¹, Benjamin Graham², Matthias Nießner¹, Saining Xie²

¹Technical University of Munich, ²Facebook AI Research

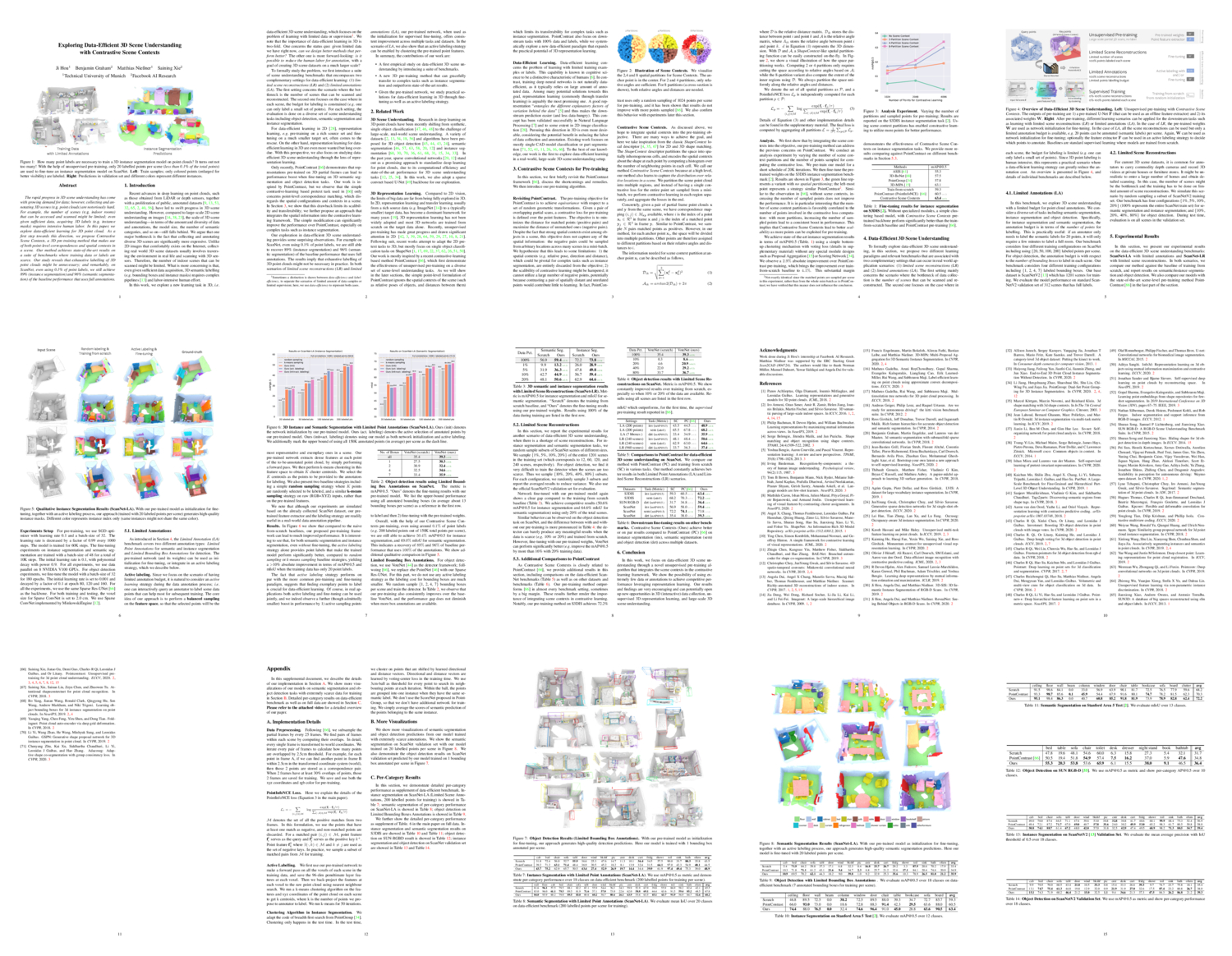

In this paper, we explore data-efficient learning for 3D point clouds. As a first step in this direction, we propose Contrastive Scene Contexts, a 3D pre-training method that makes use of both point-level correspondences and spatial contexts in a scene. Our method achieves state-of-the-art results on a suite of benchmarks where training data or labels are scarce. Our study reveals that exhaustive labelling of 3D point clouds might be unnecessary: remarkably, on ScanNet, even when using only 0.1% of point labels, we still achieve 89% (instance segmentation) and 96% (semantic segmentation) of the baseline performance that uses full annotations.

Benchmark

We introduce a benchmark for data-efficient 3D scene understanding that covers various mainstream tasks.

Challenge coming soon ... stay tuned.

Video

Publication

Paper - ArXiv - pdf (abs) | GitHub (coming soon)

If you find our work useful, please consider citing it:

@article{hou2020efficient,
  title={Exploring Data-Efficient 3D Scene Understanding with Contrastive Scene Contexts},
  author={Hou, Ji and Graham, Benjamin and Nie{\ss}ner, Matthias and Xie, Saining},
  journal={arXiv preprint arXiv:2012.09165},
  year={2020}
}

Contact

If you have questions regarding our project, please contact us directly by email: ji.hou at tum.de